Step # 1: Mount an ISO file.

# mkdir -p /media/centos

# mount -o loop /dev/centos6X.iso /media/centos
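Before pointing yum at the mount point, it can help to confirm that the ISO really mounted and exposes repository metadata. A minimal sketch, assuming the mounted tree carries a `repodata/repomd.xml` file (which yum needs under any baseurl); the helper name `is_yum_tree` is hypothetical:

```shell
# Return 0 if the directory contains yum repository metadata.
# Hypothetical helper, not part of the original steps.
is_yum_tree() {
    [ -f "$1/repodata/repomd.xml" ]
}
```

For example, `is_yum_tree /media/centos && echo "ISO tree looks usable"` prints the message only when the metadata file is present.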

Step # 2: Create a repo config file on both nodes.

# vi /etc/yum.repos.d/iso.repo

[ISO]

baseurl=file:///media/centos

enabled=1

Save and close the file.
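If you would rather not edit the file by hand on each node, the same repo definition can be written from the shell. This is only a sketch: the helper name `write_iso_repo` is hypothetical, and the `name` and `gpgcheck=0` lines are assumptions that do not appear in the file shown in this step (`gpgcheck=0` skips package signature checks, which may suit a lab but not production).

```shell
# Write the ISO repo definition into the given directory as iso.repo.
# Hypothetical helper; "name" and "gpgcheck" are assumed additions.
write_iso_repo() {
    repo_dir="$1"
    cat > "$repo_dir/iso.repo" <<'EOF'
[ISO]
name=CentOS ISO (local)
baseurl=file:///media/centos
enabled=1
gpgcheck=0
EOF
}
```

Run as root, `write_iso_repo /etc/yum.repos.d` creates the file in place, and `yum repolist` should then list the ISO repo.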

Step # 3: Stop the firewall on both nodes.

# chkconfig iptables off

# chkconfig ip6tables off
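Note that `chkconfig ... off` only keeps a service from starting at the next boot; a firewall that is already running stays up until it is stopped with `service ... stop`. The sketch below does both for each service. The `run` and `disable_firewall` helpers and the `DRY_RUN` variable are hypothetical names; by default the commands are only printed, so you can review them first.

```shell
# Echo the command when DRY_RUN is 1 (the default here); otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Stop each firewall service now and keep it from starting at boot.
disable_firewall() {
    for svc in iptables ip6tables; do
        run service "$svc" stop
        run chkconfig "$svc" off
    done
}
```

Calling `disable_firewall` prints the four commands; setting `DRY_RUN=0` as root runs them.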

Step # 4: Disable SELinux.

# vi /etc/selinux/config

Set SELINUX=disabled

Reboot to apply the change (you can postpone this).
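The edit can also be scripted instead of made in vi. A minimal sketch, assuming the config file already contains a line starting with `SELINUX=`; the helper name `set_selinux_disabled` is hypothetical. The pattern is anchored so the separate `SELINUXTYPE=` line is left alone.

```shell
# Rewrite the SELINUX= line of the given config file to "disabled".
# Hypothetical helper; writes back through cat so the original file
# keeps its ownership and permissions.
set_selinux_disabled() {
    cfg="$1"
    tmp="$(mktemp)" || return 1
    sed 's/^SELINUX=.*/SELINUX=disabled/' "$cfg" > "$tmp" && cat "$tmp" > "$cfg"
    rm -f "$tmp"
}
```

As root, `set_selinux_disabled /etc/selinux/config` makes the change. If you postpone the reboot, `setenforce 0` switches the running system to permissive mode in the meantime, and `getenforce` shows the current mode.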

Step # 5: Add hosts entries on both nodes.

# vi /etc/hosts

192.168.1.10 node1.clustering.com

192.168.1.11 node2.clustering.com

(Add both entries on both nodes.)
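Since the same two entries go on every node, a small helper that appends an entry only when the name is missing avoids duplicates if the step is repeated. This is a sketch; the helper name `add_host_entry` is hypothetical.

```shell
# Append "ip name" to a hosts file unless the name is already listed.
# Hypothetical helper, not part of the original steps.
add_host_entry() {
    hosts_file="$1"; ip="$2"; name="$3"
    if ! grep -qwF -- "$name" "$hosts_file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
}
```

As root on each node, `add_host_entry /etc/hosts 192.168.1.10 node1.clustering.com` followed by the same call for `192.168.1.11 node2.clustering.com` adds both entries.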

Step # 6: Create a ricci user and set its password.

# useradd ricci

# passwd ricci

Step # 7: Install the cluster software.

# yum install cman rgmanager luci ricci (on 1st node)

# yum install cman rgmanager ricci (on 2nd node)
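The only difference between the nodes is that luci, the web management interface, goes on the first node. If you script the install, that choice can be captured in one place. A sketch, with the hypothetical helper name `packages_for_node` and the assumption that nodes are identified by number:

```shell
# Print the package list for a node: luci is installed on node 1 only.
# Hypothetical helper; node numbering is an assumption for illustration.
packages_for_node() {
    case "$1" in
        1) echo "cman rgmanager luci ricci" ;;
        *) echo "cman rgmanager ricci" ;;
    esac
}
```

On the first node this would be used as `yum install $(packages_for_node 1)`.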

Step # 8: Start the services on both nodes.

# service ricci start (on both nodes)

Shutting down ricci:

Starting oddjobd: [ OK ]

generating SSL certificates... done

Generating NSS database... done

Starting ricci: [ OK ]

# service luci start (on 1st node only)

# service luci restart

Stop luci... [ OK ]

Start luci... [ OK ]

Point your web browser to https://centos1.clsutering.com:8084 (or equivalent) to access luci
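Before opening a browser, you can check from the shell that luci is answering on port 8084. A minimal sketch, assuming `curl` is installed and that luci is serving its own self-signed certificate (hence `-k`); the helper names `luci_url` and `luci_status` are hypothetical.

```shell
# Build the luci URL for a host; 8084 is the port luci reports above.
luci_url() {
    printf 'https://%s:%s/\n' "$1" "${2:-8084}"
}

# Print the HTTP status code luci returns for that URL.
# -k skips certificate verification (assumes a self-signed certificate).
luci_status() {
    curl -k -s -o /dev/null -w '%{http_code}\n' "$(luci_url "$1")"
}
```

For example, `luci_status 192.168.1.10` prints an HTTP status code when luci responds; a connection failure usually means the service is not running or the firewall is still blocking the port.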

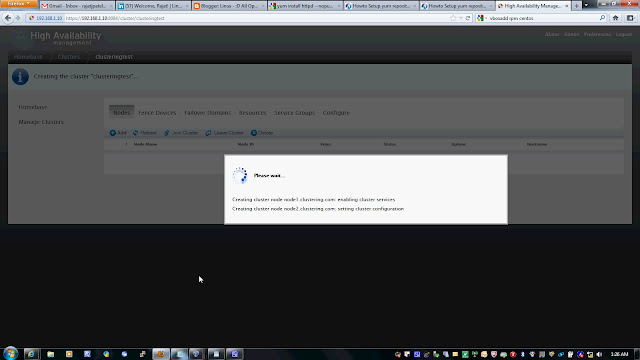

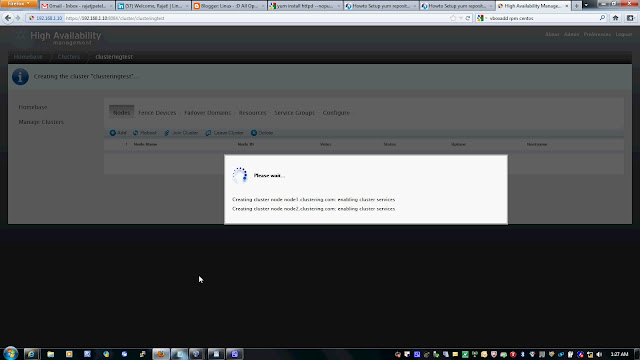

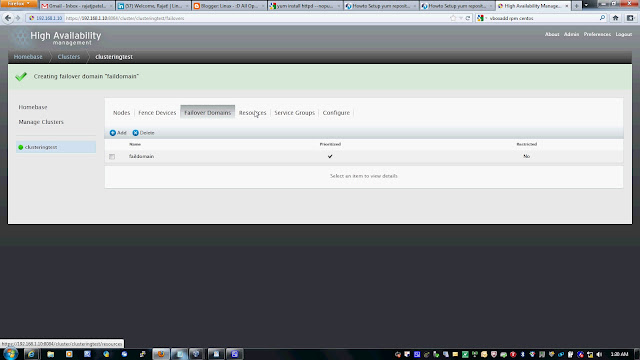

Step # 9: Open Firefox.

https://192.168.1.10:8084/

Log in as root with the root password.

Follow the screenshots above, or watch the YouTube video at the following link, to create a cluster.
